AI Grand Strategy Option 7: Networked Technological Leadership

All views in this newsletter are my own and do not represent the views of The R Street Institute, the US Navy, or any other organization I am affiliated with.

This is the seventh and final installment of the Grand Strategy for AI Competition series, which applies classical strategic frameworks to the question of how the United States should approach AI competition. Previous installments examined Preserve Democratic Technological Autonomy, Resilience Over Dominance, Competitive Pluralism, Technological Interdependence, Innovation Ecosystem Dominance, and Standards and Governance Leadership. The series has moved from defensive options toward increasingly offensive ones. This final installment examines the most demanding of the seven. It's the one where the gap between intent and execution is widest.

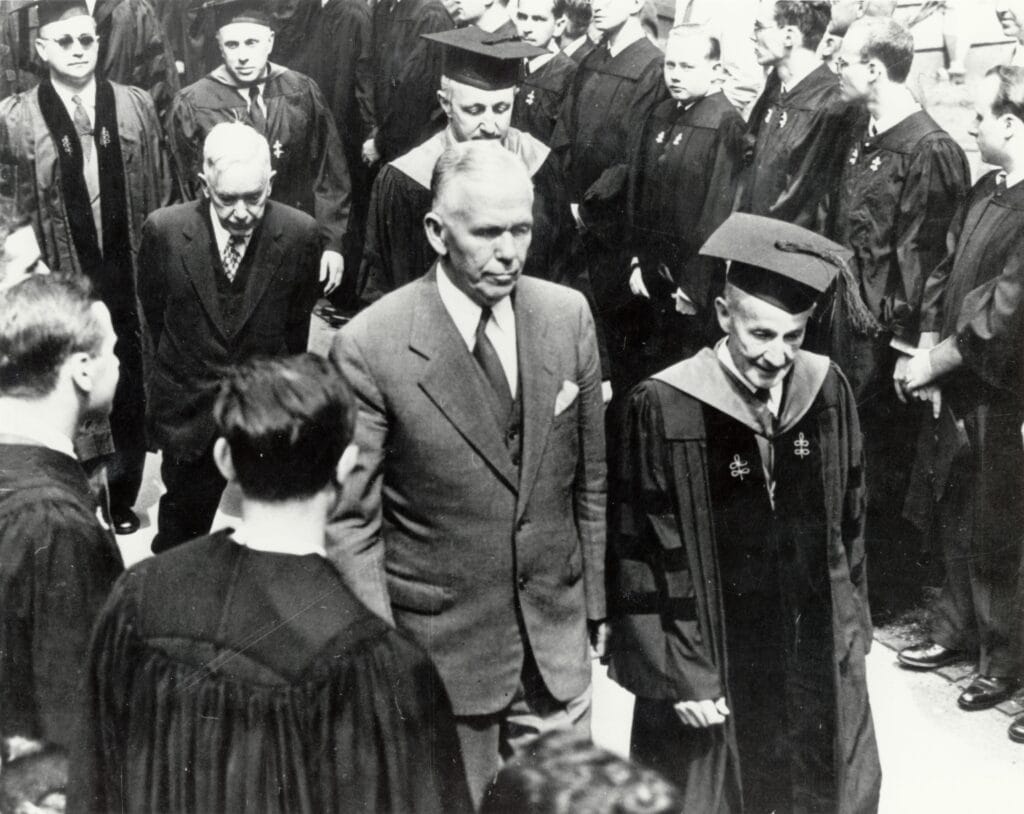

On June 5, 1947, George Marshall walked to a podium at Harvard's commencement ceremony and delivered a speech that ran under fifteen minutes. He read from notes, quietly, without theatrics. The speech proposed what would become the largest foreign assistance program in American history, and its core logic fit in a single sentence: "Our policy is directed not against any country or doctrine but against hunger, poverty, desperation, and chaos."

That framing was strategic architecture, not merely rhetoric. By refusing to name an enemy, Marshall constructed an offer that European nations, including those with significant communist parties, could all accept without humiliation. By tying American prosperity explicitly to European recovery, he made the program's self-interest legible and therefore credible.

The Marshall Plan worked not despite being a positive-sum effort, but directly because of it.

American AI policy has been built on the opposite premise. The logic of denial reached its apex under the Biden administration: the CHIPS Act, the Entity List, and export controls targeting specific chip architectures and manufacturing equipment, a posture the administration described as "small yard, high fence." The Trump administration arrived in 2025 with a different instinct, one that drove it not toward a Marshall Plan but toward monetization. Rather than blocking NVIDIA from selling H200 chips to China, the administration announced in December 2025 that sales would be permitted in exchange for a 25% revenue fee to the US government, formalized by a BIS final rule in January 2026. Congressional Republicans responded with the AI Overwatch Act, pushing to treat advanced chip exports more like weapons sales. The administration, meanwhile, is reportedly considering rules that would require US government approval to ship AI chips anywhere outside the country.

The policy positions are oscillating between denial and extraction. Neither approach is a Marshall Plan.

What Networked Technological Leadership Actually Requires

The Marshall Plan's mechanism was integration, not charity. The European nations that received aid were required to coordinate their recovery plans, thus reducing the economic nationalism that had contributed to the war in the first place. American capital flowed in, closely followed by American institutional models, standards, and supply chain relationships. By the time the program ended in 1952, Western Europe's economy had recovered and, more importantly, had been structurally linked to American prosperity in ways that proved durable for decades.

Applied to AI competition, this logic points toward a strategy that American policy has only recently begun to attempt: building the conditions for others to succeed with American technology, standards, and institutional frameworks, rather than simply denying those conditions to China.

The Commerce Department announced on March 16 that, beginning April 1, it will accept proposals from industry consortia to export "full-stack AI technology packages" to allied and partner nations, covering hardware, data centers, models, cybersecurity, and applications. It is the closest the current administration has come to the Marshall Plan logic. The architecture is at least pointed in the right direction: affirmative, export-oriented, government-backed, and designed to make American technology the default for allied AI ecosystems rather than simply blocking Chinese alternatives.

Whether it works is a different question. The program is only an announcement so far and remains untested in practice. More significantly, the administration simultaneously designated Anthropic a supply chain risk to national security after the company declined to remove usage restrictions limiting military applications of its models — a dispute that triggered Trump's order for all federal agencies to cease using Claude and set off litigation that remains unresolved. The company behind some of the world's most capable AI models is now barred from Pentagon contracts. The signal that sends to allied governments evaluating whether to build their AI ecosystems on American platforms is not encouraging: the full stack being offered may not include the most capable models, and the terms of access are subject to political disruption at any moment. The Marshall Plan's credibility rested on the consistency of the American offer. Allied governments watching the Anthropic dispute are getting a different message about what consistent looks like.

The strategic logic is not complicated. Nations that develop their AI ecosystems on American platforms, with American research partnerships, credentialed through American-influenced standards, become structurally difficult to peel away. This is not the result of coercion, but the result of the same integration logic that kept Western Europe in the American orbit long after Marshall Plan dollars stopped flowing.

This is the approach China is already taking, in reverse. Huawei builds telecom infrastructure across Africa. Chinese firms construct data centers across Southeast Asia. The Digital Silk Road offers what American policy mostly does not: an affirmative proposition, not a restriction. By the time recipient nations recognize the strategic implications of that infrastructure, the switching costs are prohibitive.

We should recognize this because we built that playbook. We just stopped running it.

Where the Analogy Breaks Down

There are, of course, limits to how effective the Marshall Plan analogy is when applied to strategic competition in AI. The postwar context was singular in ways that do not replicate. Western Europe in 1947 was devastated, desperate, and facing a Soviet threat that was effective at bringing a wide variety of players to the table. Nations that might otherwise have resisted American influence had nowhere else to go and an obvious reason to choose the American offer over the Soviet alternative. The forcing function was binary and consequential.

The developing nations that matter most for AI competition today find themselves in a different scenario. China offers infrastructure without the governance conditions that American programs typically attach. For governments less interested in democratic norms than in rapid modernization, that is a genuinely attractive proposition.

Open-source AI complicates the lock-in logic considerably. The integration dependencies that made the Marshall Plan's economic linkages durable assumed American technology would remain the scarce, proprietary resource around which ecosystems organized. In 1952, you could not simply download a competing industrial base. In 2026, Meta's Llama, France's Mistral, and China's DeepSeek are free and capable. The moment a compelling open-source AI stack exists, the ecosystem dependency logic weakens substantially. Nations do not need to accept American platform terms if capable alternatives are publicly available.

Congress has no appetite for this. The Marshall Plan required sustained political will, public argument, and a President willing to back a program he knew would be used against him politically. There is no current political coalition for large-scale American AI investment abroad. The domestic politics of technology competition run almost entirely toward restriction: who can we deny, what can we block, what can we claw back. The positive-sum frame that made Marshall's program possible is largely absent from contemporary debate.

And perhaps most importantly, the Marshall Plan had a clear endpoint. Four years, defined appropriations, measurable recovery benchmarks. Networked technological leadership in AI competition has no obvious endpoint. AI capabilities are evolving faster than any assistance program can track, the competitive landscape shifts with each major model release, and "success" is nearly impossible to define in advance. That is not a reason to abandon the approach, but it is a reason to be clear-eyed about what sustained commitment actually requires.

The Series in Full

This is the seventh and final installment of the Grand Strategy for AI Competition series. The earlier pieces worked through six other strategic options: Preserve Democratic Technological Autonomy, Resilience Over Dominance, Competitive Pluralism, Technological Interdependence, Innovation Ecosystem Dominance, and Standards and Governance Leadership.

The series moved from defensive options toward increasingly offensive ones. Networked Technological Leadership is the most demanding option. It requires the most resources, the most sustained political will, and the most willingness to make affirmative arguments for American leadership rather than simply restrictive ones. The Marshall Plan analogy is imperfect, as all historical analogies are. But its core insight has not aged: the most durable strategic advantages are the ones that others choose to participate in, not the ones imposed through denial.

George Marshall understood that. The question is whether American strategy in the age of AI will find its way back to that same clarity, or continue reaching for restriction as a substitute for the harder work of building something worth joining.

What This Means For...

Policymakers: Denial strategies have a ceiling. Export controls can slow a competitor's development; they cannot build the institutional relationships, shared standards, and integrated supply chains that create durable strategic advantage. A Marshall Plan for AI would require appropriations, political courage, and sustained attention across administrations. The absence of all three is a policy choice with consequences.

U.S. strategic competition: China's Digital Silk Road is running the Marshall Plan logic in reverse. It builds dependencies through affirmative infrastructure investment rather than restriction. Every nation that builds its digital infrastructure on Chinese platforms is a nation that will face structural pressure to align with Chinese standards, data governance norms, and political preferences. The window for an affirmative American counter-offer narrows each year that offer goes unmade.

Tech companies: The allied AI ecosystem that networked leadership would create is also a market. American firms that participate in allied capacity building through cloud partnerships, research collaboration, and standards engagement position themselves in relationships that generate returns well beyond initial investment. The Marshall Plan made American consumer goods the default across a recovering Europe. An AI equivalent would do the same for American platforms.

Aspiring strategic thinkers: Marshall's Harvard speech is worth reading in full. Fifteen minutes of remarks, no enemy named, a problem framed in human terms rather than geopolitical ones, and a logic that proved more durable than any weapon system of the era. That is what strategic clarity looks like. It is a useful benchmark against which to measure what passes for AI strategy today.