AI Competition Needs Grand Strategy: Here's What That Means

All views in this newsletter are my own and do not represent the views of The R Street Institute, the US Navy, or any other organization I am affiliated with.

Right off the bat, I'm going to apologize to you, the reader. This is much longer than my usual newsletter, but I got on a roll and couldn't find a stopping point!

Tonight I was sitting at the welcome kickoff for The Ashby Workshops, hosted by Fathom, when I heard a critique of the "winning the AI race" analogy. I have heard this critique before and agree with it: races have beginnings and ends, while technological competition is an infinite game. This time, though, the idea lodged in my head. Simply agreeing with it wasn't enough; I felt the need to explore it further.

I began thinking that if unleashing AI is so critical to our nation's wellbeing that we invoke the Space Race to muster widespread support, then we need a grand strategy more than an aphorism. From that spark, I've outlined an extensive exploration of grand strategy in general and of specific approaches one could take to build an American AI Grand Strategy.

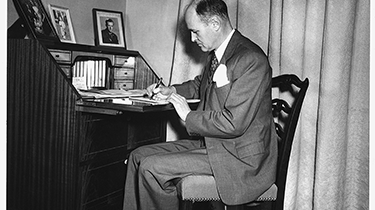

I don't want to get overly self-important here and draw direct parallels, but America's last true grand strategy emerged in a bottom-up fashion. In February 1946, a mid-level diplomat at the American embassy in Moscow sent an 8,000-word telegram to Washington that would define American strategy for the next forty-five years. George Kennan's "Long Telegram" didn't promise to win a race against the Soviet Union. It didn't call for technological superiority or industrial dominance. Instead, it outlined the political objective of preventing the Soviet model from becoming the global default while preserving freedom of action for democratic societies. To achieve this, he proposed coordinating all instruments of American power toward that end. He also identified inherent, self-defeating flaws that, given enough time, would cause the Soviet model to fail. This became containment, America's grand strategy for the entirety of the Cold War. And it worked.

Today, when policymakers discuss AI competition with China, they reach for that Space Race metaphor. We're told we must "win the AI race," that China is "catching up" in critical technologies, that falling behind means strategic defeat. This language appears everywhere—congressional testimony, think tank reports, Pentagon strategy documents.

The race metaphor is worse than wrong: it is strategically counterproductive.

Part I: Why "The AI Race" Guarantees Strategic Confusion

When we say "race," we're making specific claims about competition: there's a single finish line, speed matters most, winning is binary, and the first to cross that line achieves decisive victory. None of these assumptions apply to any form of technological competition.

Why Races Are Finite Games While Competition Is Infinite

The Space Race fit this model: a starting line, a finish line, victory for whoever crossed first, and an end once someone did. Putting a man on the moon was a discrete, measurable achievement with a clear winner. Once Armstrong and Aldrin planted the flag in 1969, that particular race was finished. Victory in the Space Race was important, but it did not end the competition of the Cold War.

AI competition doesn't work this way. There's no finish line. There's no point at which we "win" and the competition ends. Even if we achieve a breakthrough such as AGI, quantum-AI integration, or whatever the next frontier becomes, competition will continue. Technological development is an infinite game. Success means staying in the game indefinitely, not crossing a finish line first.

James Carse's distinction between finite and infinite games is relevant here. Finite games are played to be won; infinite games are played to continue playing. Finite games have fixed rules; infinite games change rules to keep the game going. AI competition is fundamentally an infinite game, but we're approaching it with finite game thinking.

This creates three strategic errors that guarantee failure:

First, the race metaphor privileges speed over sustainability. If you're running 100 meters, going all-out makes sense because the race ends. If you're playing an infinite game, burning resources for short-term advantage while weakening your long-term position is strategic suicide. We see this in current AI policy. Regulations are racing ahead of demonstrated harms and overshadowing strategic objectives, while initiatives proliferate without coordination across the private and public sectors. All of this activity without strategy creates chaos.

Second, the race metaphor treats technological achievement as victory. In infinite games, advantages are temporary. Whatever lead we gain in frontier models today, competitors will eventually match or surpass. The question isn't whether we can stay ahead for 2-3 years, but whether we can maintain the capacity to compete for 2-3 decades. That requires entirely different capabilities: institutional adaptability, innovation ecosystems, alliance networks, and governance legitimacy.

Third, and most fundamentally, the race metaphor substitutes a slogan for a political objective. "Win the AI race" tells us to run faster but not where we're running or why. What's the actual end state we're pursuing? Maintaining military superiority? Economic competitiveness? Preserving democratic governance models? Preventing authoritarian surveillance states from exporting their systems? These require different strategies with different instruments of power coordinated in different ways.

Without a defined political objective, we can't coordinate instruments of national power. DoD focuses on military AI applications. Commerce focuses on export controls. State focuses on tech diplomacy. Congress throws money at various initiatives. Nobody is orchestrating these into a coherent strategy. These aren't new problems; we've been here before.

What Grand Strategy Actually Means

British military theorist Sir Basil Liddell Hart drew on the theoretical work of Clausewitz and Sun Tzu to develop his influential concept of grand strategy. He recognized that long-term competition between great powers requires more than military strategy or foreign policy. It requires orchestrating all instruments of national power (diplomatic, information, military, and economic) toward political objectives in both peace and war.

This framing matters because the level of strategy determines what tools you can use and how you evaluate success:

- Tactical = immediate actions and responses (winning battles with available forces).

- Operational = medium-term campaigns (winning wars through coordinated battles).

- Strategic = long-term position and influence (achieving political objectives through military means).

- Grand Strategic = coordinating all instruments of power across all those levels toward political ends.

Most current AI policy operates at the tactical level. Sprinkle some export controls here, allocate some CHIPS Act funding there, spin up some random DoD AI initiatives somewhere else. Applying individual tools without coordination toward undefined objectives is improvisation, not strategy (let alone grand strategy).

Grand strategy requires three elements:

Clear political objective - What specific end state are we pursuing? Not "win," but what does success actually look like?

Coordination mechanisms - How do different instruments of power actually work together toward that objective?

Sustainability over time - Can we maintain required effort and resources across administrations, through setbacks, for decades?

When Kennan wrote the Long Telegram, he wasn't proposing better weapons or faster industrial production. He was proposing grand strategy: a clear political objective (containment of Soviet expansion) pursued through the patient, coordinated employment of all instruments of national power.

The Case Study That Matters—Why Containment Succeeded

Containment lasted forty-five years, was sustained by both political parties across multiple administrations, endured strategic setbacks from Korea to Vietnam, and ultimately achieved its political objective. Understanding why it succeeded offers criteria for evaluating any alternative grand strategy.

It Had a Clear Political Objective

Kennan's formulation was precise: prevent Soviet totalitarian ideology from expanding while maintaining the vitality of Western democratic societies. The objective wasn't "defeat the Soviet Union" or "win the Cold War" (those came later and were arguably counterproductive). The initial objective was preventing a bad outcome (Soviet domination of Europe and Asia) while preserving freedom of action for democracies.

This clarity enabled coordination. The Marshall Plan ($13 billion in economic assistance, roughly $173 billion in 2024 dollars) made strategic sense because it served containment's political objective: it aimed to prevent the European economic collapse that would have enabled Soviet expansion. NATO made sense for the same reason. Cultural exchanges, Radio Free Europe, and trade policy were likewise coordinated instruments serving the same end.

It Aligned With Democratic Institutional Strengths

Containment worked because it leveraged what democracies do well: patient coalition-building, economic interdependence, ideological appeal, institutional resilience. It didn't try to out-Stalin Stalin by matching Soviet centralized control. It didn't assume American industry could be commanded like Soviet five-year plans.

Instead, containment created conditions where democratic advantages compounded over time. The Marshall Plan rebuilt European economies while creating integrated markets. NATO provided collective security while fostering military interoperability. Cultural diplomacy exploited the appeal of democratic governance.

This is critical: containment's strength came from aligning strategy with institutional reality. Over decades, Soviet advantages in centralized mobilization mattered less than democratic advantages in innovation, adaptation, and voluntary cooperation.

It Was Sustainable Over Decades

Containment survived because the political objective remained relevant and the required resources remained acceptable to democratic publics across multiple administrations. It evolved, incorporating nuclear deterrence and becoming more militarized than Kennan intended. It expanded geographically. Through all of these changes, containment maintained strategic coherence.

It Coordinated Multiple Instruments of Power

NSC-68, the 1950 strategy document that operationalized containment, explicitly called for integrating political, economic, military, and psychological efforts. When the Soviet Union tested its first atomic bomb, the response wasn't just military (accelerate nuclear weapons development) but coordinated across instruments. We strengthened NATO, boosted European defense spending, and intensified economic integration.

It Adapted While Maintaining Strategic Coherence

Containment evolved significantly from Kennan's original formulation, yet the core political objective (preventing Soviet expansion while maintaining democratic vitality) remained constant enough to guide adaptations. This mattered because adversaries adapt. The Soviet Union tried military intimidation, ideological subversion, economic competition, proxy wars, and arms races. Containment's resilience came from maintaining strategic coherence while adjusting tactics.

The Verdict

Current AI policy fails nearly every test that containment passed. It has no clear political objective beyond "winning" a metaphorical race. It fights against democratic institutional strengths rather than leveraging them. It is not sustainable across administrations or even within single terms. It lacks coordination mechanisms to integrate instruments of power. It cannot adapt because there's no strategic coherence to maintain while adapting.

None of these failures comes from poor execution. They result from the absence of strategy. We're treating long-term strategic competition like a series of tactical problems requiring immediate responses. We're assuming that activity can substitute for strategy.

It doesn't. It never has. Containment worked not because American policymakers were more active than Soviet planners, but because they coordinated all instruments of power toward a clear political objective that aligned with democratic strengths and could be sustained for decades.

We need alternatives. Not just different policies, but different grand strategic frameworks that could actually meet the tests containment passed.

Evaluation Criteria for AI Grand Strategy

Containment's success suggests five criteria for evaluating any proposed grand strategy for AI competition:

1. Clear Political Objective - Not "win the AI race" or "achieve AI dominance." What specific end state are we trying to achieve? What does success look like in 20 years? How do we know if we're succeeding?

2. Institutional Alignment - Does the strategy leverage democratic institutional strengths (innovation ecosystems, alliance networks, voluntary cooperation) or try to match authoritarian advantages (centralized direction, industrial policy, forced coordination, rapid resource mobilization)?

3. Sustainability Over Decades - Can democratic publics support required resources and accept necessary costs across multiple administrations? Or does it require permanent crisis mobilization that will exhaust political will?

4. Coordination Mechanisms - How do diplomatic, economic, military, and informational instruments actually work together? What institutions or processes enable coordination across government?

5. Adaptive Resilience - Can the strategy evolve as China adapts its approach, as technology changes, and as new competitors emerge, while maintaining strategic coherence?

Current AI policy fails most of these criteria. The political objective beyond "winning" remains undefined or contested. Approaches often fight against democratic institutional strengths. Sustainability is questionable given political volatility. Coordination mechanisms across government are weak or nonexistent. Adaptive resilience is untested.

We need alternatives.

Eight Grand Strategies to Consider

Over the coming weeks, this newsletter will systematically examine eight alternative grand strategies for AI competition (I told you I was on a roll!). Each represents a distinct political objective with different approaches to coordinating instruments of power. I'll evaluate each against the criteria derived from containment's success, examining historical precedents for when similar approaches succeeded or failed.

Defensive/Preservationist Approaches:

- Preserve Democratic Technological Autonomy - Ensure democracies retain sovereign capability to develop and govern AI according to their values.

- Resilience Over Dominance - Maintain capacity to adapt faster than competitors over time.

Balance/Pluralist Approaches:

- Competitive Pluralism in AI - Prevent any single actor from achieving hegemonic control over AI development pathways.

- Technological Interdependence - Create dependencies that make decoupling strategically costly.

Offensive/Leadership Approaches:

- Innovation Ecosystem Dominance - Make democratic innovation ecosystems so valuable that restricting access becomes China's strategic vulnerability.

- Standards and Governance Leadership - Control international frameworks and norms that determine how AI is deployed globally.

- Networked Technological Leadership - Make alliance networks the structural advantage.

Asymmetric Approaches:

- Advantage Through Openness - Treat democratic transparency and open research as competitive advantage rather than vulnerability.

Each newsletter will examine one or two frameworks in depth, exploring historical precedents, core political objectives, institutional alignment, implementation requirements, and evaluation against our criteria. The goal isn't to pick one perfect strategy. I am hoping to spark a conversation. Perhaps we'll discover that some are ends while others are means, or that some work for certain domains while others fit different timeframes.

The goal is to move beyond "AI race" rhetoric toward actual grand strategic thinking.

What This Series Will Not Do

This isn't another hot take on whether AI will replace jobs or destroy humanity. It's not technical analysis of frontier models or breathless coverage of the latest AI lab drama. It's not policy wonkery about specific export control regulations.

This is strategic analysis using frameworks from military history and strategic theory to evaluate how democracies can compete effectively in long-term technological competition. If you want to understand why China's approach to AI might have systemic vulnerabilities despite its apparent advantages, why alliance coordination might matter more than compute superiority, why some forms of openness create competitive advantages rather than security risks, then this series will be for you. If you're looking for predictions about AGI timelines or takes on the latest AI safety debate, you'll be disappointed.

Why This Matters Now

Right now, American AI policy is improvising. We're treating a long-term strategic competition like a series of disconnected tactical problems. We're assuming speed and technological superiority will substitute for strategic clarity. We're using race metaphors that guarantee strategic incoherence.

Kennan understood something in 1946 that we've forgotten: activity without strategy wastes resources while creating vulnerability. The race metaphor generates plenty of activity: congressional hearings, funding bills, regulatory proposals, strategic initiatives. What it fails to generate is strategy.

We can do better. We must do better.

I'm not claiming this newsletter series will become the next Long Telegram. I am claiming that grand strategic thinking about AI competition needs to happen somewhere, somehow, and waiting for it to emerge from government in our current political environment seems optimistic. So I'm starting the conversation here. And maybe, just maybe, we'll discover frameworks that move us beyond race metaphors toward actual strategy.

What This Means For...

Policymakers: "AI race" rhetoric prevents development of actual grand strategy. Every hearing, strategy document, and budget request that frames competition as a race reinforces strategic incoherence by privileging speed over sustainability, tactical achievement over strategic positioning, and activity over coordination. This series will provide frameworks for moving beyond slogans toward political objectives that enable coordination across instruments of power.

U.S. Strategic Competition: We're repeating the confusion that preceded containment's development. From 1945 to 1947, American policy lurched between accommodation and confrontation with the Soviet Union without a coordinating framework. Kennan provided that framework. We need equivalent strategic clarity for AI competition, or we'll waste years and resources on uncoordinated tactical responses while China pursues a coherent grand strategy.

Tech Companies: Current policy incoherence reflects the absence of a grand strategy. Expect continued whiplash until a coherent framework emerges. Companies that understand the grand strategic options being debated can better anticipate how policy will evolve.

Aspiring Strategic Thinkers: Liddell Hart's grand strategy framework applies beyond military domains—it's about orchestrating multiple instruments toward political objectives over time in competitive environments. This matters for any complex challenge requiring sustained effort across multiple dimensions. The principles that made containment work (clear objectives, institutional alignment, sustainability, coordination, adaptability) provide a template for strategic thinking in any domain where you're playing infinite games against adaptive adversaries.